Run the Pipeline

We will build the DAG from PythonOperator-based tasks.
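As a rough idea of what a DAG built from PythonOperator tasks can look like, here is a minimal sketch. The function name `build_cats_dag`, the DAG id `cats_pipeline`, and the task ids `extract`, `transform`, and `load` are assumptions made for illustration, not names taken from the original code. It also assumes Airflow 2.4 or newer, where the `schedule` argument is available.

```python
from datetime import datetime


def build_cats_dag(extract_fn, transform_fn, load_fn):
    """Wire three Python callables into an extract -> transform -> load DAG."""
    # Airflow is imported inside the function so this sketch can be loaded
    # on a machine without Airflow; a DAG file would usually import at the top.
    from airflow import DAG
    from airflow.operators.python import PythonOperator

    with DAG(
        dag_id="cats_pipeline",           # assumed name
        start_date=datetime(2023, 1, 1),  # any past date works for manual runs
        schedule=None,                    # trigger manually (Airflow 2.4+)
        catchup=False,
    ) as dag:
        extract = PythonOperator(task_id="extract", python_callable=extract_fn)
        transform = PythonOperator(task_id="transform", python_callable=transform_fn)
        load = PythonOperator(task_id="load", python_callable=load_fn)

        # The >> operator sets the order: extract, then transform, then load.
        extract >> transform >> load

    return dag
```

In a DAG file, the result would be assigned at module level, for example `dag = build_cats_dag(extract_fn, transform_fn, load_fn)`, since Airflow picks up DAG objects from the module's global variables.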

Apache Airflow is based on the idea of DAGs (Directed Acyclic Graphs). This means we’ll have to define tasks for the pieces of our pipeline and then arrange them in the order they should run. Here, we have created a dummy function that uses xcom_pull to get the extracted data and transform it to our requirements. A second xcom_pull then gets the transformed cat data and saves it to a CSV file. The DAG code arranges the extract, transform, and load tasks into a workflow, and that workflow is our pipeline.
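The transform and load steps described above could be sketched roughly as follows. The function names, the XCom keys `raw_cat_facts` and `transformed_cat_facts`, the upstream task ids `extract` and `transform`, the output file name, and the shape of the payload (a dictionary with a `data` list of facts) are all assumptions for illustration. The "transform" here is deliberately simple: it trims whitespace and recomputes each fact's length.

```python
import csv


def transform_cat_facts(ti):
    """Pull the raw payload from XCom, tidy it up, and push the result."""
    payload = ti.xcom_pull(task_ids="extract", key="raw_cat_facts")
    rows = []
    for item in payload["data"]:  # assumed payload shape
        fact = item["fact"].strip()
        rows.append({"fact": fact, "length": len(fact)})
    ti.xcom_push(key="transformed_cat_facts", value=rows)
    return rows


def load_cat_facts(ti, csv_path="cat_facts.csv"):
    """Pull the transformed rows from XCom and write them to a CSV file."""
    rows = ti.xcom_pull(task_ids="transform", key="transformed_cat_facts")
    with open(csv_path, "w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=["fact", "length"])
        writer.writeheader()
        writer.writerows(rows)
    return csv_path
```

Both functions take `ti` (the task instance), which Airflow passes to a PythonOperator callable when the callable declares a parameter with that name.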

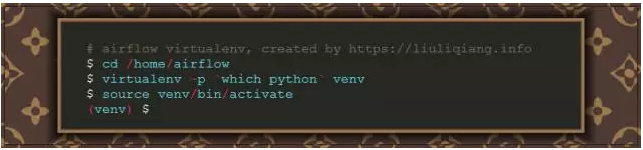

Coding the Pipeline

Create a Python file at dags/cats_pipeline.py. Make sure to put DAG files inside the dags folder (you’ll have to create it first), as that’s where Airflow will look for them. In this file, we will write a Python script that extracts, transforms, and loads (ETL) the data and runs the data pipeline we have created. In this example, we extract a few cat facts from the catfact.ninja API, using the requests library to get the JSON response. xcom_push then saves the fetched results to Airflow’s metadata database.
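A minimal sketch of the extract step might look like this. The function name `extract_cat_facts`, the XCom key `raw_cat_facts`, the `limit` parameter, and the choice of the `/facts` endpoint are assumptions for illustration. The `http_get` parameter is also hypothetical and only exists so the function can be tried with a stand-in client instead of a live request.

```python
CAT_FACTS_URL = "https://catfact.ninja/facts"  # assumed endpoint


def extract_cat_facts(ti, http_get=None, limit=5):
    """Fetch cat facts as JSON and store the payload in XCom."""
    if http_get is None:
        # requests is a third-party library; it is imported lazily so the
        # function can also be called with a substitute http_get.
        import requests
        http_get = requests.get

    response = http_get(CAT_FACTS_URL, params={"limit": limit}, timeout=10)
    response.raise_for_status()  # fail the task on an HTTP error
    payload = response.json()

    # Save the fetched results so downstream tasks can read them.
    ti.xcom_push(key="raw_cat_facts", value=payload)
    return payload
```

Because XCom values live in Airflow’s database, it is best to keep the payload small, which is one reason for the `limit` parameter in this sketch.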
